Emulating AWS S3

I was looking for an S3 virtual sandbox that I could spin up on my local machine for testing purposes. Sure, it’s easy enough to set up an AWS account and work with that, but I was after something portable that didn’t depend on having an internet connection.

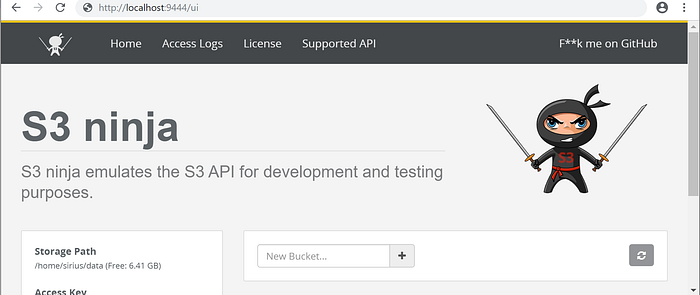

Digging around online, I came across S3 ninja.

I thought, why not? Let’s give this a lash and get it set up!

Installation Steps

The instructions (taken from the website) are shown below.

I had to make some slight modifications to get things working and I’ll go through them in the hope they’ll save somebody else some time.

My setup is Windows 10 running Docker, with PowerShell for the Docker commands. I’ve also installed the AWS CLI for Windows using the installer.

- Step (2) from the instructions above had to be modified to:

## sign in to Docker Hub first

docker login

docker run -d -p 9444:9000 -v /mnt/hgfs/ninja:/home/sirius/data scireum/s3-ninja:6

The volume mount --volume /mnt/hgfs/ninja:/home/sirius/data covers optional Step (5): /mnt/hgfs/ninja is the local directory I created outside the container, and /home/sirius/data is the internal volume within the Docker container.

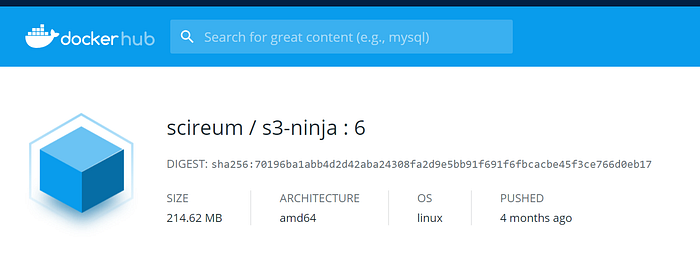

Attempting to pull scireum/s3-ninja failed, so after looking at the Docker Hub version history I changed the tag to scireum/s3-ninja:6. The :6 tag is the latest one that I found on Docker Hub.

- Once the Docker container is up, you can check its status:

docker ps

- You can get to the Web GUI using:

http://localhost:9444/ui

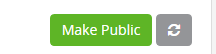
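If the container is started from a test script rather than by hand, it can help to wait until the Web GUI actually responds before doing anything else. Here is a minimal sketch of that idea in Python, using only the standard library. The function name and its parameters are my own for illustration and are not part of S3 ninja; the default URL is the one used in this article.

```python
import time
import urllib.request


def wait_for_ui(url="http://localhost:9444/ui", timeout=30.0,
                interval=1.0, request_timeout=2.0):
    """Poll `url` until it answers with HTTP 200 or `timeout` seconds pass.

    Hypothetical helper for this article: returns True once the page
    responds, False if the deadline is reached first.
    """
    deadline = time.monotonic() + timeout
    while True:
        try:
            with urllib.request.urlopen(url, timeout=request_timeout) as resp:
                if resp.status == 200:
                    return True
        except OSError:
            # Connection refused, timeouts and HTTP error statuses all end
            # up here (URLError and HTTPError are subclasses of OSError).
            pass
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)
```

Calling wait_for_ui() right after docker run gives the container up to 30 seconds to come up before the script carries on.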

- Create buckets using the Web GUI. I created a bucket called medium-demo. Once it has been created, choose the option “Make Public”.

- If you do not change the bucket’s access permissions from “private” to “Make Public”, then you will need to provide the AWS4 hash in the Authorization header for any API calls you make. This information is taken from the Ninja Web GUI “Support API” section.

Basically all object methods are supported. However no ACLs are checked. If the bucket is public, everyone can access its contents. Otherwise a valid hash has to be provided as Authorization header. The hash will be checked as expected by amazon, but no multiline-headers are supported yet. (Multi-value headers are supported).
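For a private bucket, the AWS CLI and the SDKs compute that hash for you, so you rarely build it by hand. To give a feel for what is involved, here is a minimal sketch of the signing-key derivation from the standard AWS Signature Version 4 scheme, on the assumption that S3 ninja checks the hash the same way. It only covers the key derivation and the final HMAC; building the canonical request and the string to sign is left out. The function names are mine, and the region and service values you pass in are whatever your client uses.

```python
import hashlib
import hmac


def _hmac_sha256(key: bytes, message: str) -> bytes:
    """Return the raw HMAC-SHA256 digest of `message` under `key`."""
    return hmac.new(key, message.encode("utf-8"), hashlib.sha256).digest()


def derive_signing_key(secret_key: str, date_stamp: str,
                       region: str, service: str = "s3") -> bytes:
    """Derive a SigV4 signing key.

    `date_stamp` is the request date as YYYYMMDD. The chain is:
    secret -> date -> region -> service -> the literal "aws4_request".
    """
    k_date = _hmac_sha256(("AWS4" + secret_key).encode("utf-8"), date_stamp)
    k_region = _hmac_sha256(k_date, region)
    k_service = _hmac_sha256(k_region, service)
    return _hmac_sha256(k_service, "aws4_request")


def sign(signing_key: bytes, string_to_sign: str) -> str:
    """Return the hex signature that goes into the Authorization header."""
    return hmac.new(signing_key, string_to_sign.encode("utf-8"),
                    hashlib.sha256).hexdigest()
```

The derived key depends on the date, so a signature computed for one day will not validate on another.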

Using the AWS CLI with S3 ninja

- Configure the AWS CLI config file as follows (C:\Users\<username>\.aws\config)

[profile ninja]

aws_access_key_id = <access key>

aws_secret_access_key = <secret key>

region = http://localhost:9444/s3

- The access key/secret key are listed in the Web GUI. You can also find them (and probably change them) by opening an interactive bash session in the container and looking at the application.conf file.

docker exec -ti <container id> bash

cat /home/sirius/app/application.conf

....

....

# AWS access key used for authentication checks

awsAccessKey = "<>"

# AWS secret key used for authentication checks

awsSecretKey = "<>"
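If you would rather not copy the two values by hand, a few lines of Python can pick them out of the file contents. This is a rough sketch that assumes the keys appear as simple quoted assignments, as in the excerpt above; the function name is made up for this example, and a real HOCON parser would be more robust.

```python
import re

# Matches lines such as: awsAccessKey = "value" (leading indentation allowed).
_KEY_PATTERN = re.compile(
    r'^\s*(awsAccessKey|awsSecretKey)\s*=\s*"([^"]*)"',
    re.MULTILINE,
)


def extract_aws_keys(conf_text: str) -> dict:
    """Return whichever of awsAccessKey/awsSecretKey appear in `conf_text`."""
    return {name: value for name, value in _KEY_PATTERN.findall(conf_text)}
```

Feed it the text printed by the cat command above and it returns a dictionary with the keys it found.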

You should now be able to run AWS CLI commands
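If the CLI complains about credentials at this point, the usual culprit is a typo in the config file. As a quick sanity check, here is a small sketch that reads the config text with Python’s configparser and reports which of the three settings from the ninja profile are missing. The helper name is my own, and it only looks at named profiles written as [profile name].

```python
import configparser

# The three settings the ninja profile in this article defines.
EXPECTED_KEYS = ("aws_access_key_id", "aws_secret_access_key", "region")


def missing_profile_keys(config_text: str, profile: str = "ninja") -> list:
    """Return the expected keys that are absent or empty in the profile."""
    # Only "=" is treated as a delimiter so URLs containing ":" stay intact;
    # interpolation is disabled so "%" in a value is not treated specially.
    parser = configparser.ConfigParser(delimiters=("=",), interpolation=None)
    parser.read_string(config_text)
    section = f"profile {profile}"
    if not parser.has_section(section):
        return list(EXPECTED_KEYS)
    return [
        key for key in EXPECTED_KEYS
        if not parser.get(section, key, fallback="").strip()
    ]
```

Reading the file with pathlib.Path.home().joinpath(".aws", "config").read_text() and passing the result in should give an empty list when the profile is complete.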

- Let’s copy a mocked up test file (

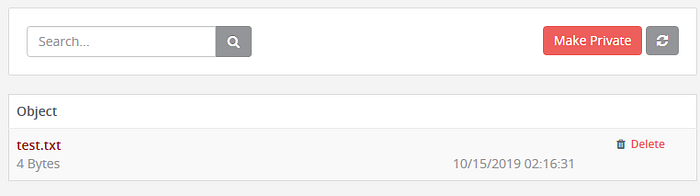

test.txt) over to our medium-demo bucket that was created earlier

PS C:\> aws --endpoint-url "http://localhost:9444/s3/" --profile ninja s3 cp test.txt s3://medium-demo/test.txt

upload: .\test.txt to s3://medium-demo/test.txt

- Check to see if it has been loaded by issuing an ls of the bucket

PS C:\> aws --endpoint-url "http://localhost:9444/s3/" --profile ninja s3 ls s3://medium-demo

2019-10-15 11:16:31          4 test.txt

- We can also check using the Web GUI to verify it has been uploaded
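Since the bucket was made public, a third way to check should be a plain HTTP GET with no credentials at all. The sketch below assumes path-style addressing under the same endpoint that was passed to the CLI, so the object would live at endpoint/bucket/key. I have not confirmed that layout against the S3 ninja docs, so treat the URL scheme as an assumption; the function names are mine.

```python
import urllib.request
from urllib.parse import quote


def object_url(endpoint: str, bucket: str, key: str) -> str:
    """Build a path-style object URL: <endpoint>/<bucket>/<key>."""
    # quote() keeps "/" by default, so nested keys keep their structure.
    return f"{endpoint.rstrip('/')}/{quote(bucket)}/{quote(key)}"


def fetch_public_object(endpoint: str, bucket: str, key: str,
                        timeout: float = 5.0) -> bytes:
    """Download an object from a public bucket without any authentication."""
    url = object_url(endpoint, bucket, key)
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read()
```

For the upload above that would be fetch_public_object("http://localhost:9444/s3/", "medium-demo", "test.txt"), which should hand back the contents of test.txt if the assumption about the URL layout holds.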